In Short

Using fast, focused research and rapid prototyping, we identified how consumers skim and interpret large volumes of reviews. This led to the launch of Popular Mentions, a clearer, more scannable way to surface recurring themes. The feature quickly proved valuable, helping users compare feedback faster and make more confident purchase decisions.

Millions of consumers use Trustpilot to navigate countless opinions and make informed choices. This project shows how fast research helped uncover ways to give users a clearer overview of many reviews, without overwhelming them.

Goal: Quickly identify the most promising product directions for helping consumers digest and summarise multiple reviews so they can make a purchase decision.

Problem: Across many user interviews, we noticed a recurring pattern: people often said it was hard to grasp the essence of a large set of reviews at a glance. They wanted a quicker way to understand what others were saying without reading every individual review.

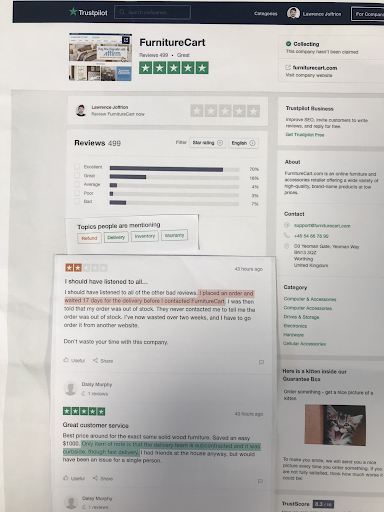

Research: Using fast, focused research methods, I explored how users navigate large sets of reviews. Short interviews and behaviour analysis revealed that people often skim for patterns rather than read full reviews. This insight shaped design directions toward clearer overviews and visual summaries.

We explored three different ideas to help users understand review content efficiently:

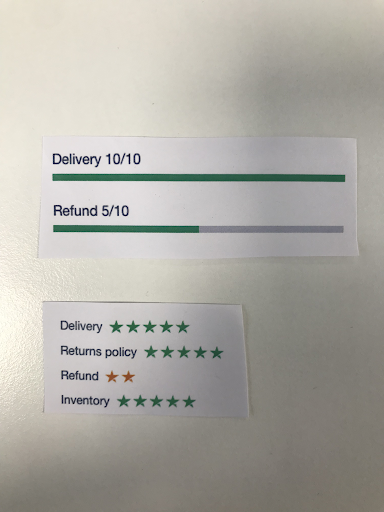

- Service Attributes

- Showing Sentiment

- Topics / Popular Mentions

Method

I ran 10 exploratory semi-structured in-person interviews with participants from five different demographics.

To move quickly and make rearranging elements easy, I chose a paper prototype. I tested each concept to uncover:

- Which concept resonates most with users

- What feels logical and expected

- What concerns or hesitations arise

- How people interpret and use these elements

Key Learnings

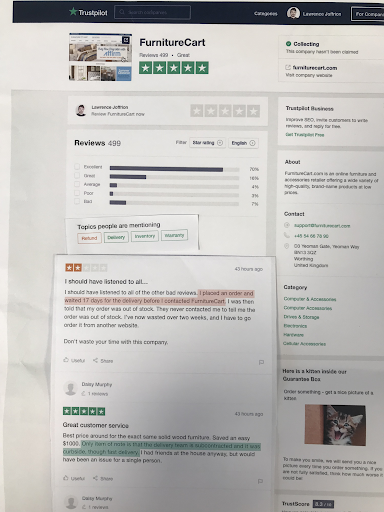

- Topics: Users assumed the topic buttons linked to company information (e.g., “refund” or “warranty”), rather than aggregated review insights.

- Overall understanding: Participants didn’t differentiate between data sources (user-generated attributes, filtered topics, or sentiment). Value depended more on clarity and usefulness than data origin.

- Quotes from reviews: Provided little perceived value; participants questioned their authenticity and relevance.

- Numbers: Worked best when easy to process and visually significant — e.g., “22 of 499 reviews mention…” felt more trustworthy than smaller figures.

- Context: Users needed more context to interpret sentiment — vague labels like “excellent” didn’t provide enough meaning.

- Sentiment highlights: Seen as both useful (“cuts through the fluff”) and intrusive (“telling you what to read”). The concept was promising but required visual refinement.

Conclusion

This fast-paced project showed how quick, targeted research can unlock valuable insights in a short time. The results informed early design directions for a clearer, more scannable review experience and paved the way for well-informed experiments.

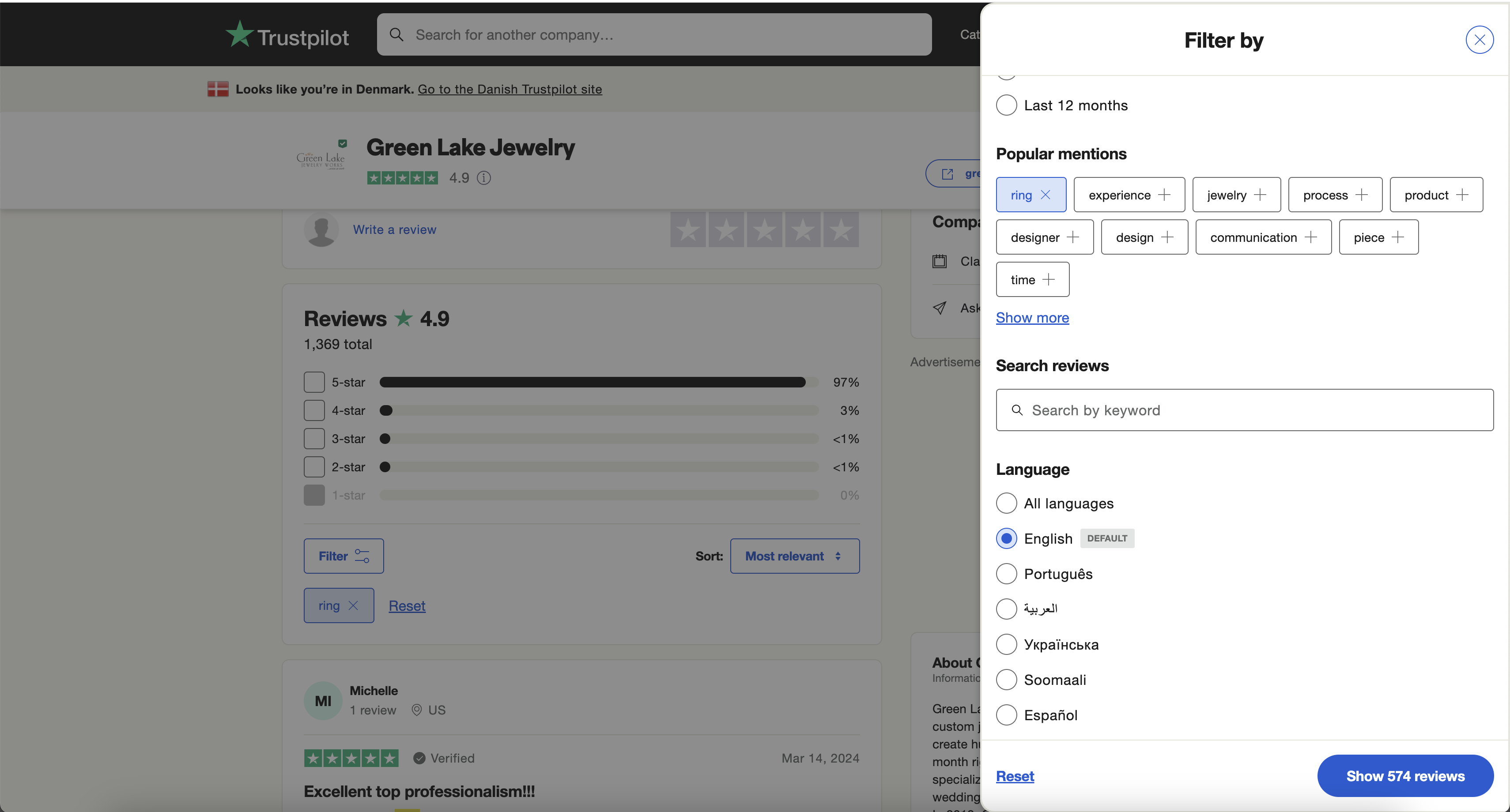

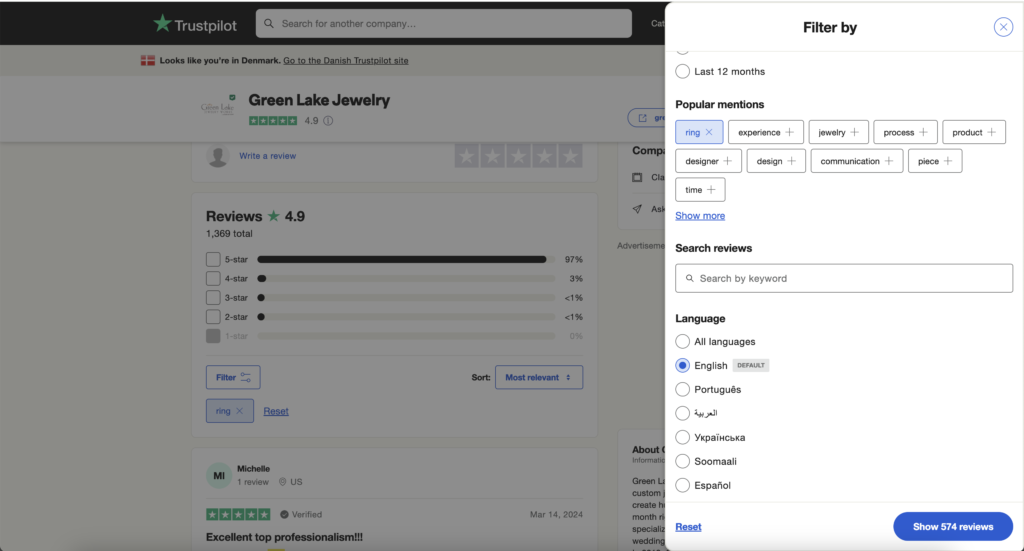

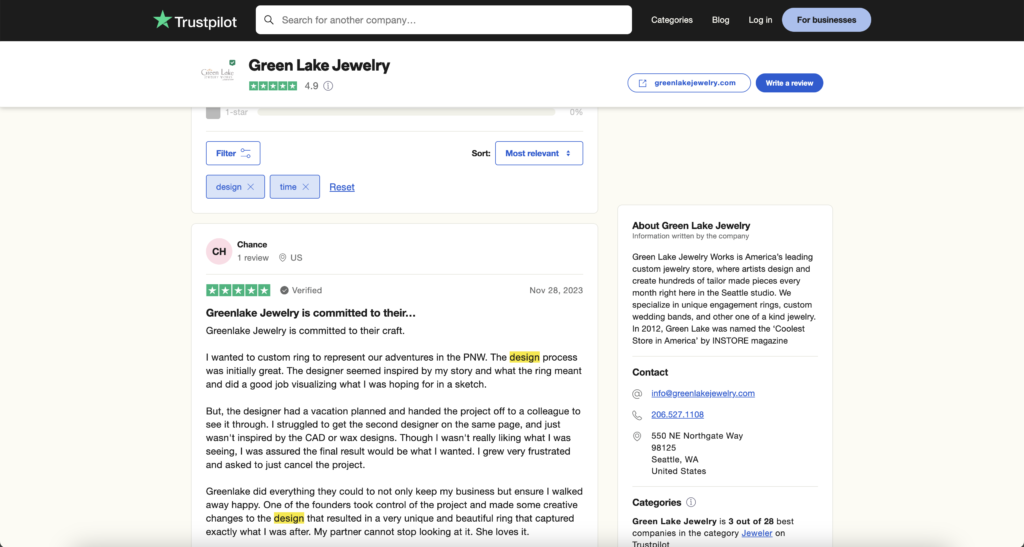

Based on these insights and further testing, we developed and launched “Popular Mentions”, a feature that allows users to quickly see which topics are most frequently discussed in reviews.

Since launch, we have tracked the feature continuously, and it has consistently proven beneficial to users, helping them navigate and compare reviews with greater ease and confidence.